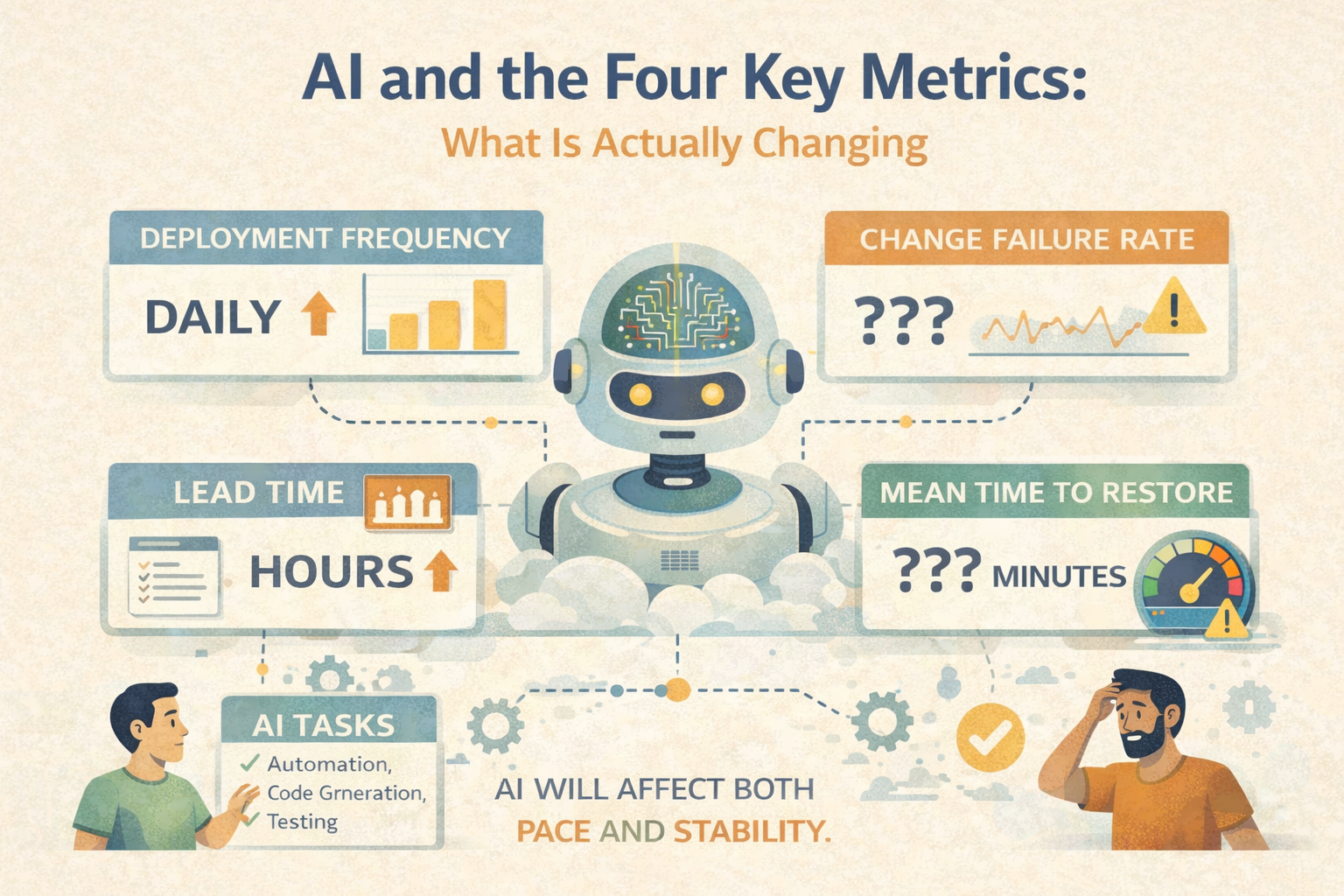

AI and the Four Key Metrics: What Is Actually Changing

The question I'm asked most often right now: how is AI changing software delivery performance?

It's a reasonable question. The tooling has matured significantly. Coding assistants are genuinely useful. Agentic workflows are beginning to handle real tasks. Leaders want to know whether their DORA metrics should be improving - and if not, why not.

Here's my honest assessment of where AI is and isn't making a material difference to the four key metrics.

Deployment Frequency: Faster Code, Same Pipeline

AI coding tools - GitHub Copilot, Cursor, and their equivalents - genuinely reduce the time it takes to write code. For well-understood tasks with clear requirements and good test coverage, they're materially faster. Some teams report 30-40% reductions in coding time for certain categories of work.

But Deployment Frequency is not limited by how fast you can write code.

In most organisations, coding is not the bottleneck. The bottleneck is the pipeline - the automated tests that take an hour, the code review process, the environment availability, the approval gates, the release coordination. AI doesn't touch any of those.

Worse: if AI makes code generation faster and the pipeline cannot absorb the extra volume, you have loaded the constraint rather than removed it. More code arrives at the review queue. The queue grows. Lead time increases even as coding velocity appears to improve.
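A rough way to see the queue effect is a toy simulation. The sketch below assumes a team whose reviewers can clear 10 pull requests a day, and it assumes (purely for illustration) that faster coding turns into 30% more pull requests. The function name and every number are hypothetical.

```python
def simulate_review_queue(arrivals_per_day, reviews_per_day, days):
    """Return the review backlog at the end of each day (toy model)."""
    backlog = 0
    history = []
    for _ in range(days):
        backlog += arrivals_per_day              # new pull requests join the queue
        backlog -= min(backlog, reviews_per_day)  # reviewers clear what they can
        history.append(backlog)
    return history


# Hypothetical figures: review capacity stays at 10 PRs/day in both runs.
before_ai = simulate_review_queue(arrivals_per_day=10, reviews_per_day=10, days=20)
with_ai = simulate_review_queue(arrivals_per_day=13, reviews_per_day=10, days=20)

print(before_ai[-1])     # 0
print(with_ai[-1])       # 60
print(with_ai[-1] / 10)  # 6.0 -> days a new PR waits at the back of the queue
```

In this model the backlog grows by three pull requests every day, so after four working weeks a new change waits roughly six days for review that used to happen the same day.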

Where AI does help: reducing friction in writing tests alongside features (which shortens feedback loops and can genuinely improve Deployment Frequency over time), and reducing the rework caused by unclear specifications.

Lead Time for Change: Dominated by Handoffs, Not Code

Lead Time for Change measures the time from code commit to running in production. In elite organisations, this is measured in minutes or hours. In most organisations, it is measured in days or weeks.
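As a minimal sketch of that definition, the snippet below takes pairs of commit and deployment timestamps and reports the median lead time in hours. The timestamps are made up, and using the median rather than the mean is my assumption here, chosen so that one slow change does not dominate the figure.

```python
from datetime import datetime
from statistics import median


def lead_times_hours(changes):
    """Hours from commit to running in production, one value per change."""
    return [
        (deployed - committed).total_seconds() / 3600
        for committed, deployed in changes
    ]


# Hypothetical (commit time, production deploy time) pairs.
changes = [
    (datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 3, 10, 30)),   # 1.5 hours
    (datetime(2025, 3, 3, 14, 0), datetime(2025, 3, 5, 14, 0)),   # 2 days
    (datetime(2025, 3, 4, 11, 0), datetime(2025, 3, 10, 11, 0)),  # 6 days
]

print(lead_times_hours(changes))          # [1.5, 48.0, 144.0]
print(median(lead_times_hours(changes)))  # 48.0
```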

The dominant factor in long lead times is not code writing time. It is handoff time - the time work sits waiting between activities. Waiting for a code review. Waiting for an environment. Waiting for a security scan. Waiting for a change approval board. Waiting for coordination with another team.
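To put numbers on this, here is a small sketch of a single change broken into active and waiting stages. The stage names and hours are invented, and I have included coding time (which strictly sits before the commit) so that the effect of faster coding on the end-to-end time is visible.

```python
def total_hours(stages):
    """End-to-end elapsed hours across all stages."""
    return sum(hours for _name, hours, _waiting in stages)


def flow_efficiency(stages):
    """Share of elapsed time in which someone is actually working on the change."""
    active = sum(hours for _name, hours, waiting in stages if not waiting)
    return active / total_hours(stages)


# Hypothetical journey of one change: (stage, hours, is_waiting).
stages = [
    ("write code", 4, False),
    ("wait for review", 20, True),
    ("review", 1, False),
    ("wait for environment", 16, True),
    ("test", 2, False),
    ("wait for change approval", 40, True),
    ("deploy", 1, False),
]

# Same journey with coding time halved and nothing else changed.
faster_coding = [
    (name, hours / 2 if name == "write code" else hours, waiting)
    for name, hours, waiting in stages
]

print(total_hours(stages))                  # 84
print(f"{flow_efficiency(stages):.0%}")     # 10%
print(total_hours(faster_coding))           # 82.0
```

Halving coding time in this example takes the end-to-end time from 84 hours to 82, because 76 of those hours were spent waiting.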

AI reduces code writing time. It does not reduce handoff time.

There are emerging use cases where AI can help - automated PR summaries that make review faster, AI-assisted security scanning that catches issues earlier, automated documentation that reduces review back-and-forth. These are real but marginal. They nibble at the edges of a problem whose core is organisational, not technological.

The honest answer: If your Lead Time for Change is measured in weeks, AI will not fix it. The problem is your process, your architecture, or your governance - not the speed of code generation.

Change Failure Rate: The Quality Wildcard

This is where I have the most concern about the current wave of AI adoption.

Change Failure Rate measures the proportion of releases that cause a degraded outcome requiring a fix, rollback, or patch. In elite organisations, this sits below 5%. In medium and low performers, it can be 20-40%.
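The calculation itself is simple. The sketch below assumes each deployment record carries a flag saying whether it needed a fix, rollback, or patch. The `needed_remediation` field name and the data are hypothetical.

```python
def change_failure_rate(deployments):
    """Proportion of deployments that needed a fix, rollback, or patch."""
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d["needed_remediation"])
    return failed / len(deployments)


# Hypothetical month: 40 deployments, two of which (3 and 11) needed remediation.
deployments = [
    {"id": i, "needed_remediation": i in {3, 11}}
    for i in range(1, 41)
]

print(f"{change_failure_rate(deployments):.1%}")  # 5.0%
```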

AI-generated code is not inherently higher quality than human-written code. Research from 2024-2025 consistently shows that AI coding tools produce code with similar defect rates to experienced human engineers - higher, in some studies, for complex or novel problems. The key qualifier in all of these studies: quality outcomes depend heavily on test coverage and review rigour.

Organisations with strong test automation validate AI-generated code as effectively as human-written code. Defects are caught early. CFR stays low or improves because development speed increases without sacrificing validation.

Organisations with weak test coverage are shipping AI-generated code with less scrutiny than they would apply to human-written code. The speed feels good. The increasing CFR comes later.

The risk: AI accelerates output. Without quality infrastructure to match that pace, defects reach production at least as fast as the output grows - and faster still if scrutiny drops.
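A back-of-the-envelope model makes the arithmetic concrete. It assumes a fixed chance that any change contains a defect and a fixed share of defects caught by tests and review before release. Both rates, and the 40% increase in output, are illustrative assumptions rather than measured values.

```python
def escaped_defects(changes, defect_rate, catch_rate):
    """Expected defects reaching production (toy model)."""
    return changes * defect_rate * (1 - catch_rate)


# Hypothetical baseline: 100 changes a month, 10% defective, 90% caught.
baseline = escaped_defects(changes=100, defect_rate=0.10, catch_rate=0.90)

# 40% more output, validation keeps pace: defects grow in line with output.
strong_tests = escaped_defects(changes=140, defect_rate=0.10, catch_rate=0.90)

# 40% more output, scrutiny drops so only half of defects are caught.
weak_tests = escaped_defects(changes=140, defect_rate=0.10, catch_rate=0.50)

print(round(baseline, 2))      # 1.0
print(round(strong_tests, 2))  # 1.4
print(round(weak_tests, 2))    # 7.0
```

With validation keeping pace, failures rise in step with output and the failure rate per change holds steady. When scrutiny slips, the same extra output produces seven times the baseline number of escaped defects.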

Mean Time to Recovery: AI's Best DORA Use Case

This is the metric where I see AI having the most immediate and genuine impact - and it's underappreciated.

MTTR measures how long it takes to recover from a failure. The activities involved - incident triage, root cause analysis, runbook execution, communication, fix and deploy - are exactly the kinds of tasks where AI assistance is genuinely transformative.
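For reference, a minimal sketch of the measurement: average the time between detecting a failure and restoring service. The incident timestamps are invented, and measuring from detection rather than from the start of the failure is an assumption of this example.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents):
    """Average duration from detection to restored service."""
    durations = [restored - detected for detected, restored in incidents]
    return sum(durations, timedelta()) / len(durations)


# Hypothetical (detected, restored) pairs.
incidents = [
    (datetime(2025, 4, 1, 10, 0), datetime(2025, 4, 1, 10, 45)),  # 45 minutes
    (datetime(2025, 4, 7, 2, 0), datetime(2025, 4, 7, 4, 15)),    # 135 minutes
    (datetime(2025, 4, 19, 16, 30), datetime(2025, 4, 19, 17, 0)),  # 30 minutes
]

print(mean_time_to_recovery(incidents))  # 1:10:00
```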

AI-assisted incident response tools can:

- Correlate signals across observability platforms at a speed no human can match

- Generate incident summaries for stakeholders in real time

- Identify similar past incidents and their resolutions

- Draft runbooks from historical incident data

- Suggest fixes based on error patterns
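To give a feel for one item on that list, here is a deliberately simple sketch of matching a new incident against past ones using word overlap (Jaccard similarity). Real tools are likely to use far richer signals, so treat this as a toy. The incident IDs, summaries, and resolutions are all hypothetical.

```python
def tokens(text):
    """Lower-cased set of words in a summary."""
    return set(text.lower().split())


def jaccard(a, b):
    """Size of the overlap divided by size of the union of two word sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def most_similar(new_summary, past_incidents):
    """Return the past incident whose summary shares the most words."""
    new_tokens = tokens(new_summary)
    return max(
        past_incidents,
        key=lambda inc: jaccard(new_tokens, tokens(inc["summary"])),
    )


# Hypothetical incident history.
past_incidents = [
    {"id": "INC-101",
     "summary": "checkout api timeout after database connection pool exhausted",
     "resolution": "raised pool size and restarted service"},
    {"id": "INC-102",
     "summary": "login page errors after expired tls certificate",
     "resolution": "renewed certificate"},
    {"id": "INC-103",
     "summary": "slow search results after index rebuild",
     "resolution": "rolled back index config"},
]

match = most_similar(
    "payments api timeout database connection pool exhausted", past_incidents
)
print(match["id"], "->", match["resolution"])
# INC-101 -> raised pool size and restarted service
```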

Organisations that have integrated AI into their incident response workflows are reporting meaningfully lower MTTRs - not because their systems fail less, but because they recover faster when they do.

This is the Safer and Sooner intersection in BVSSH (Better Value Sooner Safer Happier) terms. Faster recovery means a smaller blast radius. A smaller blast radius means teams are more willing to deploy frequently. More frequent deployment means smaller changes. Smaller changes have lower failure rates. The virtuous cycle compounds.

The Honest Summary

AI is not, currently, transforming most organisations' DORA metrics. It is:

- Marginally improving Deployment Frequency for teams that were already capable

- Barely touching Lead Time for Change (the problem is process, not code speed)

- A genuine risk to Change Failure Rate in organisations with weak quality foundations

- A meaningful accelerant for MTTR in organisations that invest in AI-assisted observability

The organisations seeing the best results are those that had the fundamentals in place before AI arrived. The fundamentals - strong pipelines, good test automation, observable systems, low WIP, generative culture - remain the primary determinant of delivery performance.

AI is here, and it will matter more over time. But the organisations that get the most from it will be the ones that have already done the hard organisational and technical work. That work doesn't start with buying a tool. It starts with understanding your system.
